AI-Generated Content — Research-backed, not based on personal experience

This post contains affiliate links. We may earn a commission at no extra cost to you.

Intel vs NVIDIA's New Groq 3 LPU and Vera CPU: Which Wins for AI Workloads in 2026?

NVIDIA's Groq 3 LPU and Vera CPU challenge Intel's data center dominance. Which processor delivers better AI inference performance?

NVIDIA just dropped a bombshell at GTC 2026 that has the build community buzzing. Trust me, when Jensen Huang announces that NVIDIA is taking on Intel directly in the CPU space with their new Vera CPU and Groq 3 LPU combo, you know things are about to get spicy.

Coverage of the announcement reinforces a familiar point: AI workloads are fundamentally different beasts from traditional compute tasks, and Intel's aging architecture might finally have met its match (Tom's Hardware).

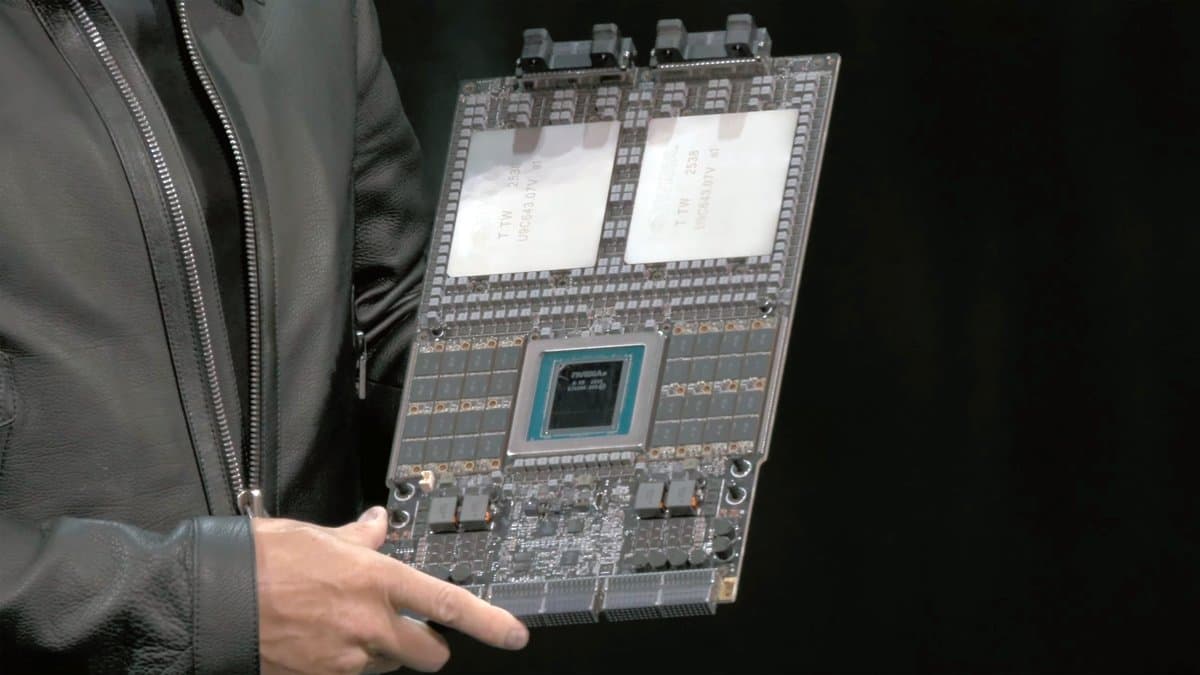

But here's the thing that caught my attention in all the GTC coverage. While everyone's talking about the Groq 3's SRAM architecture and its promise to slash AI inference latency, the real story is how NVIDIA is positioning this as a complete Intel alternative for data centers. We're not just looking at another AI accelerator card here.

NVIDIA's Vera CPU is a full-blown CPU designed specifically for agentic AI tasks, and it's already in production, with cloud providers like Oracle and Cloudflare lined up as early customers (The Indian Express).

Alright so, let's break down what we're actually comparing here. Intel's traditional x86 dominance versus NVIDIA's specialized AI-first approach. The build community consensus is that 2026 is shaping up to be the year AI workloads finally dictate hardware choices rather than the other way around.

Quick Specs Comparison

| Feature | Intel (Traditional CPUs) | NVIDIA Groq 3 + Vera CPU |
|---------|-------------------------|---------------------------|
| Architecture | x86-64 | ARM-based CPU (Vera) + AI-optimized LPU (Groq 3) |
| Primary Use | General computing | AI inference & training |
| Memory Type | DDR5 DRAM | SRAM (Groq 3) + DDR5 (Vera) |
| AI Optimization | Limited | Purpose-built |
| Availability | Immediate | Q3-Q4 2026 |
| Target Market | Enterprise, consumer | Data centers, cloud providers |

Performance Architecture

Intel's current CPU lineup relies on traditional x86 cores that excel at general-purpose computing but struggle with the parallel processing demands of modern AI workloads. NVIDIA's ARM-based approach takes a fundamentally different architectural direction. Real quick, let me explain why this matters for builders considering AI-focused systems.

The Intel CPU architecture uses cache hierarchies and branch prediction optimized for sequential code execution. While Intel has added AI acceleration features like AMX (Advanced Matrix Extensions) to their latest processors, these additions feel more like afterthoughts than fundamental design choices. When you're running inference on large language models or computer vision tasks, you're essentially asking a race car to haul freight.
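If you want to check whether an Intel system you already own exposes AMX, Linux lists it among the CPU feature flags. The sketch below is a minimal, Linux-only example that assumes the kernel reports the `amx_tile`, `amx_bf16`, and `amx_int8` flags in `/proc/cpuinfo`, as recent Linux kernels do on AMX-capable Xeon processors. The helper names are hypothetical.

```python
from pathlib import Path

# Linux reports AMX support through these CPU feature flags.
AMX_FLAGS = {"amx_tile", "amx_bf16", "amx_int8"}


def parse_cpu_flags(cpuinfo_text: str) -> set:
    """Collect every flag listed on the 'flags' lines of /proc/cpuinfo text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def amx_support(cpuinfo_text: str) -> set:
    """Return the subset of AMX flags present in the given cpuinfo text."""
    return AMX_FLAGS & parse_cpu_flags(cpuinfo_text)


cpuinfo = Path("/proc/cpuinfo")
if cpuinfo.exists():
    found = amx_support(cpuinfo.read_text())
    print("AMX flags:", sorted(found) if found else "none")
else:
    print("/proc/cpuinfo not found (not a Linux system)")
```

Parsing the text separately from reading the file keeps the helper testable on machines that aren't running Linux.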

NVIDIA's Groq 3 LPU flips this design philosophy completely. The Groq 3 uses an SRAM-based memory design, which could significantly reduce the memory bottlenecks that plague traditional CPUs during AI inference (IEEE Spectrum). On-chip SRAM is designed to offer much lower access latency than DDR5, potentially translating to faster token generation in AI applications.
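To see why memory speed matters so much for token generation, a common back-of-envelope model treats batch-size-1 decoding as memory-bound: every generated token requires streaming roughly all of the model's weights from memory, so throughput tops out near bandwidth divided by model size. The sketch below uses that simplified model. It reasons about bandwidth rather than latency, ignores compute, KV-cache traffic, and batching, and every number in it is a hypothetical placeholder, not a Groq 3 or DDR5 specification. It also assumes the weights fit in SRAM, which in practice means sharding them across many chips.

```python
def max_tokens_per_second(params_billions: float, bytes_per_param: float,
                          bandwidth_gb_s: float) -> float:
    """Upper bound on batch-1 decode speed when streaming weights dominates.

    Simplified model: each token reads every weight from memory exactly once.
    """
    bytes_per_token = params_billions * 1e9 * bytes_per_param
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Hypothetical placeholder figures, NOT vendor specifications.
MODEL_PARAMS_B = 8.0   # an 8-billion-parameter model
BYTES_PER_PARAM = 1.0  # 8-bit weights
ASSUMED_BANDWIDTH_GB_S = {
    "DDR5 system memory (assumed)": 300.0,
    "on-chip SRAM, aggregated (assumed)": 30_000.0,
}

for label, bw in ASSUMED_BANDWIDTH_GB_S.items():
    rate = max_tokens_per_second(MODEL_PARAMS_B, BYTES_PER_PARAM, bw)
    print(f"{label}: at most ~{rate:,.0f} tokens/s")
```

Plugging in real bandwidth figures once they're published would give a rough ceiling, not a benchmark result.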

But here's where it gets interesting for system builders. NVIDIA's Vera CPU isn't an AI accelerator at all; it's designed as a full CPU replacement for agentic AI tasks. Think web browsing, file processing, and complex multi-step reasoning that current AI agents struggle with due to latency constraints.

Intel's counter-strategy has been to increase core counts and add specialized instruction sets, but that's like adding more lanes to a highway when what you really need is a train. The fundamental memory architecture remains a bottleneck for AI workloads.

Winner: NVIDIA Groq 3 + Vera CPU

Power Efficiency and Thermals

Trust me, power efficiency is where this comparison gets really spicy for builders planning high-performance AI systems. Traditional Intel CPUs can require substantial power under full load, and that's before you factor in the additional GPU cards typically needed for AI acceleration.

VRM thermals on Intel boards can become a nightmare when you're running sustained AI training or inference workloads. Motherboard VRMs can hit thermal throttling before the CPU does, especially on budget B-series boards that skimp on power delivery components.

NVIDIA promises up to 10x better inference performance per watt from the Groq 3 compared to traditional architectures (The Decoder). If that figure holds up in independent testing, it will be because purpose-built silicon doesn't waste cycles on general-purpose features that AI workloads don't need.

NVIDIA's LPX server rack configuration packs 128 Groq 3 processors and can deliver up to 35x higher throughput per megawatt when paired with the Vera Rubin platform (CoinCentral). For data center builds, that efficiency could translate to major savings in cooling and power infrastructure.
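Claims like "10x performance per watt" and "35x throughput per megawatt" are ratios of throughput to power. The sketch below shows how such a ratio is computed for two deployments. The `Deployment` class and all figures are hypothetical and are not meant to reproduce NVIDIA's numbers.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical inference deployment described by throughput and power."""
    name: str
    tokens_per_second: float  # aggregate inference throughput
    power_kw: float           # total power draw in kilowatts

    def tokens_per_second_per_mw(self) -> float:
        # 1 MW = 1,000 kW
        return self.tokens_per_second / (self.power_kw / 1_000.0)

    def tokens_per_joule(self) -> float:
        # 1 W = 1 J/s, so (tokens/s) / W = tokens/J; 1 kW = 1,000 W
        return self.tokens_per_second / (self.power_kw * 1_000.0)


def efficiency_ratio(a: Deployment, b: Deployment) -> float:
    """How many times more throughput per megawatt `a` delivers than `b`."""
    return a.tokens_per_second_per_mw() / b.tokens_per_second_per_mw()


# Illustrative numbers only; not measurements of any real system.
baseline = Deployment("baseline CPU+GPU rack", tokens_per_second=50_000, power_kw=100)
candidate = Deployment("specialized inference rack", tokens_per_second=400_000, power_kw=120)

print(f"{candidate.name} vs {baseline.name}: "
      f"{efficiency_ratio(candidate, baseline):.1f}x throughput per MW")
```

Because 1 watt is 1 joule per second, tokens per second per watt is the same quantity as tokens per joule, which makes it easy to relate to an energy bill.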

But let's be real about the thermal management challenges. Intel's mature component support means you can grab any number of proven coolers, from budget tower coolers to high-end AIOs, and you know exactly what mounting hardware you need before you start the build.

NVIDIA's new platform is still an unknown quantity for thermal solutions. Early adopter builds always come with cooling headaches until the aftermarket catches up.

Winner: NVIDIA Groq 3 + Vera CPU (by a significant margin)

Ecosystem and Software Support

Here's where Intel's decades of dominance really shows. Every piece of software you can think of has been optimized for x86 architecture. Operating systems, compilers, development tools, and enterprise applications all assume Intel-compatible processors.

The AMD CPU challenge taught us that even architecturally similar processors can have compatibility hiccups in niche applications. NVIDIA's ARM-based Vera CPU represents a much bigger leap: x86 binaries won't run on it natively, so software may need to be recompiled for ARM and re-tuned to perform well.
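Moving from x86 to ARM means every binary or container image has to exist for both architectures. The sketch below shows one way a deployment script might pick the right build using Python's standard `platform.machine()`. The artifact names and helper functions are hypothetical, and the alias table covers only the most common spellings.

```python
import platform
from typing import Dict, Optional

# platform.machine() spells the same architecture differently per OS
# (e.g. "x86_64" on Linux, "AMD64" on Windows, "aarch64" on Linux ARM, "arm64" on macOS).
_ALIASES = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "aarch64": "arm64",
    "arm64": "arm64",
}


def normalized_arch(machine: Optional[str] = None) -> str:
    """Map a machine string (default: this host's) to 'amd64' or 'arm64'."""
    raw = machine if machine is not None else platform.machine()
    key = raw.lower()
    return _ALIASES.get(key, key)


def pick_artifact(artifacts: Dict[str, str], machine: Optional[str] = None) -> str:
    """Return the build for the host architecture, or fail loudly if none exists."""
    arch = normalized_arch(machine)
    if arch not in artifacts:
        raise RuntimeError(f"no {arch} build available; the software must be recompiled for it")
    return artifacts[arch]


# Hypothetical artifact names for an app shipped for both architectures.
ARTIFACTS = {
    "amd64": "inference-server-amd64.tar.gz",
    "arm64": "inference-server-arm64.tar.gz",
}
print(pick_artifact(ARTIFACTS, "aarch64"))
```

Failing loudly on an unknown architecture is deliberate: silently falling back to an x86 build on an ARM host would only surface later as an "exec format error" or a slow emulation path.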

However, the AI software space is already heavily NVIDIA-focused. CUDA has been the de facto standard for AI development for years, and major frameworks like PyTorch and TensorFlow are deeply optimized for NVIDIA hardware. Reports that OpenAI may adopt NVIDIA inference chips based on Groq technology signal where the industry could be heading (mashdigi).

Intel's software advantage becomes less relevant when you're building specifically for AI workloads. Most AI developers already use containerized environments and cloud-native tools that reduce, though don't eliminate, the underlying hardware differences: container images still have to be built for each CPU architecture.

The real question here is about development and deployment tools. Intel has mature debugging, profiling, optimization, and testing tools that have been refined over decades. NVIDIA's toolchain for its new CPU platform is far younger by comparison, so expect rougher edges early on.

Winner: Intel (for now, but the gap is closing fast)

Cost and Value Proposition

Alright so, pricing is where things get complicated because we're not comparing apples to apples here. Intel's flagship data center CPUs typically command premium pricing depending on core count and features. But that's just the CPU cost. For AI workloads, you typically need additional GPU cards, which can add significant cost to your build.

NVIDIA's Vera Rubin platform integrates everything into a single solution, but NVIDIA hasn't released specific pricing yet. Some industry analysts expect significant cost savings compared to traditional CPU+GPU combinations, especially once reduced power and cooling requirements are factored in.

But let's talk about the real cost consideration for builders: total cost of ownership. Intel systems have predictable upgrade paths, established resale values, and known compatibility requirements. You can build an Intel system today and have confidence in finding replacement parts or upgrade options years down the line.
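Total cost of ownership can be approximated as hardware cost plus energy cost over the system's service life, minus any resale value. The sketch below is a simplified model under stated assumptions: constant average power draw, a single PUE (power usage effectiveness) factor to cover cooling and facility overhead, and flat electricity pricing. The class name and every number are hypothetical placeholders, since NVIDIA hasn't released pricing.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8_760  # 365 days x 24 hours


@dataclass
class SystemCost:
    """Hypothetical cost inputs for one server or rack."""
    name: str
    hardware_usd: float  # up-front purchase price
    avg_power_kw: float  # average IT power draw
    pue: float           # power usage effectiveness: facility power / IT power

    def energy_cost_usd(self, years: float, usd_per_kwh: float) -> float:
        # Facility energy = IT power x PUE x hours of operation
        kwh = self.avg_power_kw * self.pue * HOURS_PER_YEAR * years
        return kwh * usd_per_kwh

    def tco_usd(self, years: float, usd_per_kwh: float, resale_usd: float = 0.0) -> float:
        # Purchase + energy over the service life, minus what you recover at resale
        return self.hardware_usd + self.energy_cost_usd(years, usd_per_kwh) - resale_usd


# Placeholder inputs only; not real prices for any Intel or NVIDIA system.
x86_plus_gpu = SystemCost("x86 CPU + GPU server", hardware_usd=100_000, avg_power_kw=10.0, pue=1.5)
tco = x86_plus_gpu.tco_usd(years=3, usd_per_kwh=0.10, resale_usd=15_000)
print(f"{x86_plus_gpu.name}: ${tco:,.0f} over 3 years")
```

The `resale_usd` term is where Intel's established resale values show up; for a new, specialized platform that number is much harder to estimate.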

NVIDIA's platform is betting everything on AI workloads continuing to dominate data center requirements.

If the AI bubble deflates or if new computational approaches emerge, early adopters could be stuck with expensive specialized hardware that can't pivot to other uses.

If NVIDIA's efficiency claims hold up, the performance-per-dollar calculation for pure AI inference tasks is likely to favor the specialized approach. But for mixed workloads or organizations that need flexibility, Intel's general-purpose design retains advantages.

Winner: NVIDIA Groq 3 + Vera CPU (for dedicated AI workloads)

Availability and Market Reality

This is where the rubber meets the road for builders planning systems in 2026. Intel CPUs are available now from every major retailer and system integrator. You can walk into Best Buy and grab a flagship Intel desktop processor off the shelf.

NVIDIA's Vera CPU won't be available until the second half of 2026, and initial availability will be limited to cloud providers like Alibaba, Oracle, and Cloudflare (The Indian Express). System integrators like ASUS, Cisco, Dell, and HP are preparing to adopt the new platform, but consumer availability is still unclear.

For builders who need systems deployed in 2026, Intel remains the practical choice. The NVIDIA solution is more of a 2027+ consideration for most applications.

Intel also has the advantage of established supply chains and manufacturing partnerships. NVIDIA is still scaling up its CPU production capabilities after acquiring Groq's technology, and supply constraints could limit early adoption even among enterprise customers.

Winner: Intel (by necessity)

Future Roadmap and Longevity

Intel's roadmap continues to focus on core count increases and incremental IPC improvements. Their latest announcements show continued investment in AI acceleration features, but these remain bolt-on additions to fundamentally general-purpose architectures.

NVIDIA's commitment to the Vera Rubin supercomputer platform represents a fundamental shift toward AI-first computing. The 40-rack POD with 1,152 Rubin GPUs and 60 exaflops of compute power signals where NVIDIA sees the future of data center computing (The Decoder).
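To put the POD figures in per-GPU terms, dividing the quoted compute by the GPU count gives a rough average. The source doesn't say which numeric precision the 60-exaflop figure refers to, so the result shouldn't be compared directly against FP64 supercomputer rankings.

```latex
\frac{60\ \text{EFLOPS}}{1{,}152\ \text{GPUs}}
  = \frac{60{,}000\ \text{PFLOPS}}{1{,}152\ \text{GPUs}}
  \approx 52.1\ \text{PFLOPS per GPU}
```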

But here's the risk assessment builders need to consider: NVIDIA is essentially betting that AI workloads will dominate computing for the foreseeable future.

If that bet pays off, early adopters of the Groq 3 platform could have significant competitive advantages. If the AI market consolidates or shifts toward different computational approaches, specialized hardware could become expensive paperweights.

Intel's diversified approach offers more flexibility but potentially less optimization for the workloads that matter most in 2026 and beyond.

Winner: NVIDIA Groq 3 + Vera CPU (if AI trends continue)

The Intangibles

Beyond the spec sheets and benchmarks, there are several factors that could influence your choice between these platforms.

NVIDIA's announcement timing feels strategic. They're launching just as AI deployment is moving from experimental to production scale. Industry coverage suggests inference latency is becoming the critical bottleneck for interactive AI applications, so getting ahead of this curve could matter for competitive advantage.

Intel's response to this challenge will be telling. They've historically been slow to recognize architectural shifts (remember their mobile processor struggles?), but they've also shown an ability to course-correct when existential threats emerge.

The build community's early feedback on NVIDIA's announcement has been surprisingly positive. Usually, builders are skeptical of new platforms that disrupt established workflows. But the AI workload optimization is compelling enough that many are willing to consider the switch.

Cable management jokes aside, there's something to be said for NVIDIA's integrated approach. Instead of managing separate CPU coolers, GPU cooling solutions, and complex motherboard layouts, the LPX rack design consolidates everything into a standardized form factor. That simplification could be worth the platform risk for many deployments.

Final Verdict

Here's the bottom line for builders evaluating these platforms in 2026:

Get Intel if: You need systems deployed immediately, require broad software compatibility, run mixed workloads beyond AI, or need proven upgrade paths and long-term support. Intel remains the safe choice for general-purpose computing and traditional enterprise applications.

Get NVIDIA Groq 3 + Vera CPU if: Your workloads are primarily AI inference and training, power efficiency is critical, you can wait until late 2026 for availability, and you're comfortable being an early adopter of a new platform. The performance advantages for AI workloads appear substantial enough to justify the platform risk.

The reality is that most builders won't be choosing between these platforms in 2026. Intel will continue to dominate general-purpose computing while NVIDIA carves out the AI-specific market.

But for organizations where AI performance is the primary requirement, NVIDIA's integrated approach offers compelling advantages that traditional CPU architectures simply can't match.

Trust me, this is one of those inflection points where the right choice depends entirely on your specific use case. The consolidated rack design is a real draw if you're building dedicated AI inference systems, but Intel's support advantages remain important for mixed workloads.

Check out the NVIDIA GeForce RTX 4070 SUPER if you're looking to add AI acceleration to existing Intel builds, or consider the AMD Ryzen 7 7800X3D if you'd rather stay on traditional x86 architecture with a strong alternative to Intel.

For storage solutions that can handle the massive datasets these AI workloads demand, the Samsung 990 PRO 2TB SSD delivers the sequential read speeds you need for efficient model loading and training data access.

The build community consensus is clear: 2026 is the year AI workloads finally drive hardware choices rather than adapting software to available hardware. Choose your platform accordingly.
